In today's rapidly evolving technology landscape, data privacy has become a significant concern for customers when they engage with organizations. According to the “Cisco 2024 Data Privacy Benchmark Study”, a whopping 94% of organizations acknowledge that their customers would not buy from them if their data were not adequately protected. Customers now even seek tangible evidence, such as external privacy certifications, that an organization can be trusted to manage their data.

Concern about data privacy is particularly pronounced in the era of Artificial Intelligence (AI). A notable 62% of consumers are apprehensive about how organizations implement and use AI, and 60% have already lost trust in organizations because of their AI practices. In response, a staggering 91% of organizations acknowledge that they need to do more to reassure customers that their data is used in AI applications only for intended and legitimate purposes.

The “Cisco 2024 Data Privacy Benchmark Study” gathered insights from 2,600 security and privacy professionals across 12 countries: 5 in Europe, 4 in Asia, and 3 in the Americas.

Data Security Concerns With GenAI

Generative AI (GenAI) applications such as ChatGPT and Bard use AI and machine learning to create content, including text, sound, music, images, video, and code, in response to user prompts. However, this power brings challenges, especially when users do not fully understand AI or do not use it cautiously.

The top concerns about how GenAI could hurt organizations include threats to legal and intellectual property (IP) rights (69%), information being shared publicly or with competitors (68%), and incorrect output (68%). Addressing these concerns requires innovative approaches to data and risk management.

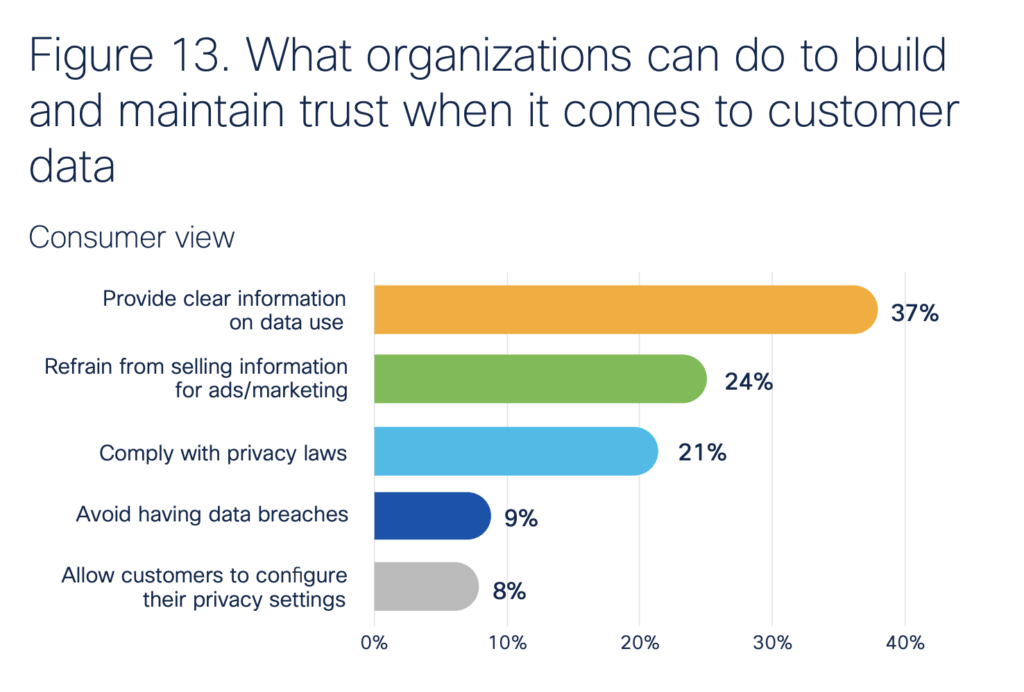

A notable challenge in building trust around data is the gap between customer priorities and organizational objectives. Consumers prioritize clear information on how their data is used (37%) and protection from having their data sold for marketing purposes (24%), while organizations place greater emphasis on complying with privacy laws (25%) and preventing data breaches (23%). Although these organizational objectives are crucial, the gap underscores the need for greater focus on transparency, particularly in AI applications, where customers can find it hard to understand how AI algorithms reach their decisions.

Controls on GenAI Information Entry

As more organizations around the world adopt GenAI, controlling the information entered into these applications becomes increasingly critical. Survey results reveal that 62% of respondents have entered information about their company's internal processes, 48% have entered non-public company information, and 45% have entered employee names or information. All of this could pose significant risks if shared externally. To address these data leak risks, organizations are implementing controls such as limits on what data can be entered (63%), restrictions on which GenAI tools employees can use (61%), and, in some cases, outright bans on GenAI applications (27%). Nearly all organizations reported having at least one of these controls in place.

As AI technology rapidly evolves, organizations worldwide will continue to adapt and refine the types and nature of their controls. This evolution is driven by the need to prevent unauthorized sharing of confidential or personal information, and it reflects the shifting landscape where AI intersects with data privacy and security.