The explosive surge in social media usage has brought an alarming rise in fake accounts and spam, along with content depicting adult nudity and sexual activity. Amid this digital chaos, Meta Platforms, Inc. (NASDAQ: META) has stepped up action against the accounts and content that violate Facebook and Instagram policies. Its recently released “Community Standards Enforcement Report” for Q1 2023 sheds light on the scale of that enforcement, which aims to keep Facebook and Instagram safe places to connect with friends, family and acquaintances worldwide.

Both social media platforms share the same content policies because they are owned by the same company, Meta Platforms.

Fake Accounts On Facebook, Instagram

Meta has been cracking down on fake accounts and spam posts on Facebook and Instagram for almost a decade, with the stated aim of providing the best possible experience for users worldwide.

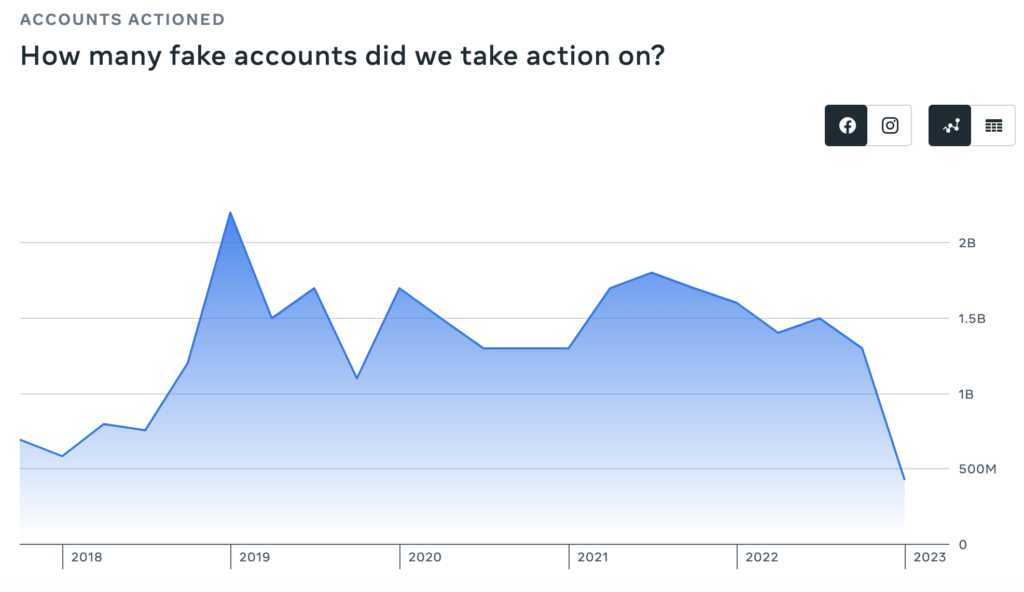

Facebook took action against approximately 426 million fake accounts in Q1 2023. Surprisingly, this is the lowest number of fake accounts addressed by the company in the past five years.

Quarterly and yearly comparisons show a steep decline: the number of fake accounts disabled on Facebook fell 67.2% QoQ and 73.4% YoY in the first quarter of 2023. These figures suggest that the company's sustained efforts against hackers, scammers, and other fraudsters are paying off.
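The article quotes only the percentage declines, not the Q4 2022 and Q1 2022 counts behind them. As a rough cross-check, an earlier value can be back-solved as current / (1 - decline). The sketch below assumes the 67.2% and 73.4% figures are measured against the Q4 2022 and Q1 2022 totals respectively; the baselines it prints are only what those percentages imply, not numbers quoted from Meta's report. The function name `implied_prior` is illustrative.

```python
# Sketch: recover the earlier counts implied by a stated percentage decline.
# Assumption: the 67.2% (QoQ) and 73.4% (YoY) declines are relative to the
# Q4 2022 and Q1 2022 fake-account totals, which the article does not quote.

def implied_prior(current: float, pct_decline: float) -> float:
    """Return the earlier value that would shrink to `current` after a `pct_decline` % drop."""
    return current / (1 - pct_decline / 100)


q1_2023 = 426  # fake accounts actioned on Facebook in Q1 2023, in millions

q4_2022 = implied_prior(q1_2023, 67.2)  # implied QoQ baseline
q1_2022 = implied_prior(q1_2023, 73.4)  # implied YoY baseline

print(f"Implied Q4 2022: ~{q4_2022:,.0f} million")
print(f"Implied Q1 2022: ~{q1_2022:,.0f} million")
```

Under that assumption, the stated declines correspond to baselines of roughly 1.3 billion and 1.6 billion fake accounts.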

An impressive 98.7% of the violating accounts that Meta took action against in Q1 2023 were detected by the company itself before any user reported them. This highlights Meta's proactive approach to detecting and addressing accounts that violate its policies. Users on Facebook reported the remaining 1.3%.

The collaboration between the social media giant and its users is crucial in ensuring the enforcement of community standards and maintaining a safe and accountable online environment.

It is worth mentioning that fake accounts represent approximately 4%-5% of the total worldwide monthly active users (MAUs) on Facebook.

There is no data available for Instagram.

Spam Content on Facebook

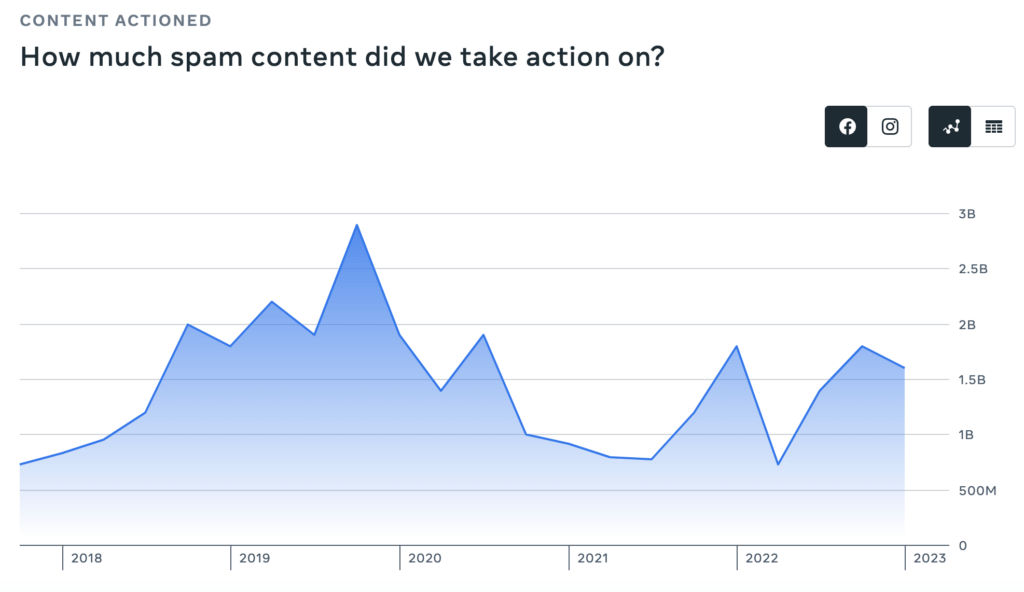

Meta has been actively taking action against spam content on Facebook, which includes images, videos, text, and comments. This type of content often originates from automated sources, including bots or scripts, or from a person who uses multiple accounts to spread deceptive content and misleading information.

Meta removed approximately 1.6 billion pieces of spam content on Facebook in Q1 2023, down from 1.8 billion in both Q4 2022 and Q1 2022.

It's worth noting that the holiday quarter of 2019 saw the largest volume of spam content on Facebook, with a whopping 2.9 billion pieces of content removed from the platform. Scammers and spammers appear to have been especially active during the festive season, and Meta moved quickly to counter them.

But it's not just about removal; Meta also tries to strike the right balance. The company restores content that was removed incorrectly or when circumstances change. Restores can result from user appeals or from issues the company identifies itself.

Q1 2023 witnessed a remarkable surge in the restoration of content on Facebook that was previously flagged as spam. During the quarter, a whopping 103 million pieces of content were successfully brought back to the platform, compared to only 50.6 million in Q4 2022 and 33 million in Q1 2022. This represents a massive 103.6% QoQ and 212.1% YoY increase in restored content on Facebook during the first quarter of 2023.
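The growth rates above follow from the standard percentage-change formula, (new - old) / old x 100. A minimal sketch that reproduces them from the restored-content figures quoted in this section (the helper name `pct_change` is illustrative):

```python
# Percentage change between two periods: positive means an increase.
def pct_change(new: float, old: float) -> float:
    return (new - old) / old * 100


# Spam content restored on Facebook, in millions of pieces (figures from this section).
restored = {"Q1 2022": 33.0, "Q4 2022": 50.6, "Q1 2023": 103.0}

qoq = pct_change(restored["Q1 2023"], restored["Q4 2022"])  # quarter over quarter
yoy = pct_change(restored["Q1 2023"], restored["Q1 2022"])  # year over year

print(f"QoQ: {qoq:.1f}%")  # QoQ: 103.6%
print(f"YoY: {yoy:.1f}%")  # YoY: 212.1%
```

Both results match the 103.6% QoQ and 212.1% YoY increases reported for restored content.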

So, what contributed to this significant upswing in content restoration?

Two key factors played pivotal roles. Firstly, the deprecation of violating links in content enabled Meta to identify and rectify mistakenly removed content with higher accuracy. This improvement ensured that legitimate content wasn’t caught in the crossfire of spam detection. Secondly, changes to enforcement policies refined the approach to content restoration, ensuring that valuable and lawful content is promptly restored to the platform.

There is no data available for Instagram.

Adult Nudity And Sexual Activity On Facebook, Instagram

In Q1 2023, there was an increase in the prevalence of adult nudity and sexual activity on Facebook, reaching approximately 0.09%. This percentage rose from 0.06% in Q4 2022 and 0.04% in Q1 2022. This surge was primarily due to an increase in the number of sexually suggestive videos that went viral between January and March.

To put this in perspective, approximately 9 out of every 10,000 content views on Facebook in Q1 2023 contained adult nudity and sexual activity.

It is worth noting that the highest prevalence of such content was observed in Q1 2019, at 0.12% to 0.14%. This means that, on average, between 12 and 14 out of every 10,000 content views violated Facebook's standards for adult nudity and sexual activity during that period.

Meanwhile, the prevalence of adult nudity and sexual activity on Instagram has remained relatively stable over the last 24 months. In Q1 2023 it hovered around 0.03%, consistent with the figures observed in Q4 2022 (0.03%-0.04%) and Q1 2022 (0.02%-0.03%).

In other words, approximately 3 out of every 10,000 pieces of content viewed on Instagram in Q1 2023 contained adult nudity and sexual activity.

It’s important to consider that a piece of violating content can be published once but viewed numerous times, potentially reaching thousands or even millions of viewers. Therefore, measuring the views of violating content, rather than simply the amount of published violating content, provides a more accurate reflection of its impact on the community.

Prevalence is the estimated number of views that displayed violating content divided by the estimated total number of content views on the platform (Facebook or Instagram).
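The definition above, and the "out of every 10,000 views" conversions used throughout this section, can be expressed in a few lines. This is a minimal sketch: in Meta's reporting both view counts are estimates, and the sample counts below (9 violating views out of 10,000) are hypothetical, chosen only to illustrate the formula. The function names are illustrative.

```python
# Prevalence as a percentage: views of violating content / total content views.
def prevalence(violating_views: float, total_views: float) -> float:
    return violating_views / total_views * 100


# Convert a prevalence percentage into violating views per 10,000 content views.
def per_10k_views(prevalence_pct: float) -> float:
    return prevalence_pct / 100 * 10_000


# Hypothetical sample: 9 violating views among 10,000 content views.
p = prevalence(9, 10_000)
print(f"{p:.2f}%")  # 0.09%

# Prevalence figures quoted in this article, expressed per 10,000 views.
quoted = [
    ("Facebook Q1 2023", 0.09),
    ("Facebook Q1 2019 (low)", 0.12),
    ("Facebook Q1 2019 (high)", 0.14),
    ("Instagram Q1 2023", 0.03),
]
for label, pct in quoted:
    print(f"{label}: ~{per_10k_views(pct):.0f} per 10,000 views")
```

Because the measure counts views rather than posts, a single widely viewed post can raise prevalence far more than many posts that are rarely seen.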

This raises the question: what is Meta doing to address nudity-related posts and comments shared by users on Facebook and Instagram?

In Q1 2023, Meta took action on 38.6 million pieces of content (such as posts, photos, videos or comments) or accounts involving adult nudity and sexual activity on Facebook. That is a substantial increase from 29.1 million in Q4 2022 and 31 million in Q1 2022, representing 32.6% QoQ and 24.5% YoY growth in content actioned.
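As a quick check on these growth rates, the percentage change is the difference between the two periods divided by the earlier period, applied here to the figures in millions:

```latex
\text{QoQ} = \frac{38.6 - 29.1}{29.1} \times 100\% \approx 32.6\%,
\qquad
\text{YoY} = \frac{38.6 - 31.0}{31.0} \times 100\% \approx 24.5\%
```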

It is worth noting that the highest volume of actioned adult nudity and sexual activity content on Facebook was recorded in Q1 2020, at 39.5 million pieces.

Instagram has a lower prevalence of this type of violation than Facebook. In Q1 2023, Instagram took action on approximately 11.7 million pieces of content related to adult nudity and sexual activity, the highest number of such actions in the last two years and a 12.5% YoY and 8.3% QoQ increase. The data suggest that spam actors shared more explicit videos and photos containing nudity on the platform between January and March.

CEO Mark Zuckerberg spares no effort to keep billions of users active and engaged on Facebook and Instagram. By cracking down on fake accounts and spam content, he aims to build a social media environment that is enjoyable and worth the time users invest in it. If that succeeds, Zuckerberg hopes not only to lengthen how long users stay engaged but also to win back those who left in search of a more rewarding experience elsewhere, reviving their interest in the platforms.