At a time when regulators are calling out online platforms for failing to moderate content and curb misinformation, YouTube says it is a step ahead of its peers!

The popular Google-owned video-sharing platform wants the entire world to know it is doing a great job of enforcing its own moderation rules. According to the company, a shrinking share of its users now come across problematic content on the site, and a sizeable number of videos containing hate speech, scams, or graphic violence are taken down quickly.

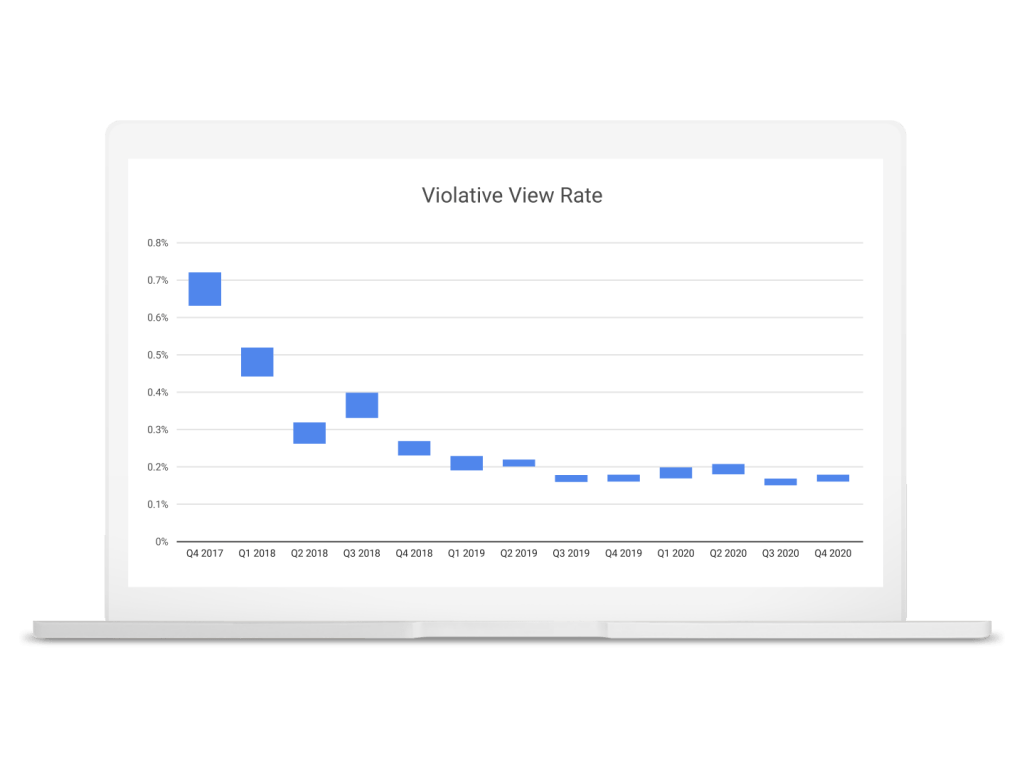

In Q4 2020, only 18 out of every 10,000 views on YouTube (0.18%) were of videos that violate the company's policies. That is a considerable improvement over Q4 2017, when views of problematic videos stood at 72 out of every 10,000 (0.72%).

That said, there is still room for error in YouTube's calculation. Why?

Even if the platform accurately measures how well it moderates content, that measurement is ultimately based on what YouTube itself believes should be removed from the platform. This has let some troubling videos slip past its radar and stay up on the site.

YouTube calls the new figure, which tracks views of problematic videos, the 'violative view rate', or VVR. It is the latest addition to the company's Community Guidelines enforcement report.

YouTube has established VVR as a key internal metric for understanding and evaluating its own moderation performance. Put simply, a higher VVR suggests YouTube is falling behind in curbing problematic videos on its platform, while a lower VVR means the company is acing the job!
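To make the arithmetic behind the metric concrete, here is a minimal Python sketch. It assumes VVR is simply violative views expressed per 10,000 total views, as the figures in this article suggest; the helper names are hypothetical, and the sketch does not model how YouTube actually collects or estimates these counts.

```python
def violative_view_rate(violative_views: int, total_views: int, per: int = 10_000) -> float:
    """Hypothetical helper: violative views expressed per `per` total views."""
    if total_views <= 0:
        raise ValueError("total_views must be positive")
    # Multiply first so whole-number inputs divide cleanly.
    return violative_views * per / total_views


def percent_change(old: float, new: float) -> float:
    """Relative change from `old` to `new`, in percent (negative means a drop)."""
    return (new - old) / old * 100


# Figures quoted in the article: 72 per 10,000 in Q4 2017, 18 per 10,000 in Q4 2020.
q4_2017 = violative_view_rate(72, 10_000)
q4_2020 = violative_view_rate(18, 10_000)

print(f"Q4 2017: {q4_2017:.0f} per 10,000 ({q4_2017 / 100:.2f}% of views)")
print(f"Q4 2020: {q4_2020:.0f} per 10,000 ({q4_2020 / 100:.2f}% of views)")
print(f"Change: {percent_change(q4_2017, q4_2020):.0f}%")
```

Run as-is, it prints rates of 72 and 18 per 10,000 (0.72% and 0.18% of views) and a change of -75%, i.e. the quoted figures amount to a three-quarters drop in the share of views landing on violating videos.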

As the chart above shows, VVR dropped steeply from 2017 to 2018 before stabilizing. This came after YouTube started relying primarily on machine learning to spot troubling videos instead of relying solely on users to report issues. Jennifer O'Connor, Product Director for Trust and Safety at YouTube, said the company's goal is to get this number as close to zero as possible.

Note that VVR does not count videos that break only YouTube's advertising guidelines without violating its broader Community Guidelines.

O'Connor said the YouTube team uses VVR as a North Star metric to gauge how well it keeps the platform's users safe from problematic content. If the number goes up, the company can work out what kinds of videos are slipping through its flagging system and prioritize training its machine learning models to catch them.

All in all, YouTube clearly does not want to be held responsible by regulators around the world for slacking on content moderation. Nonetheless, it is worth asking whether an internal metric defined by a company like YouTube is any better than independent studies that examine the results without bias.

Let us know your thoughts in the comments below. We will keep you updated on all future developments. Until then, stay tuned.